
What does entropy mean?

11/18/2023

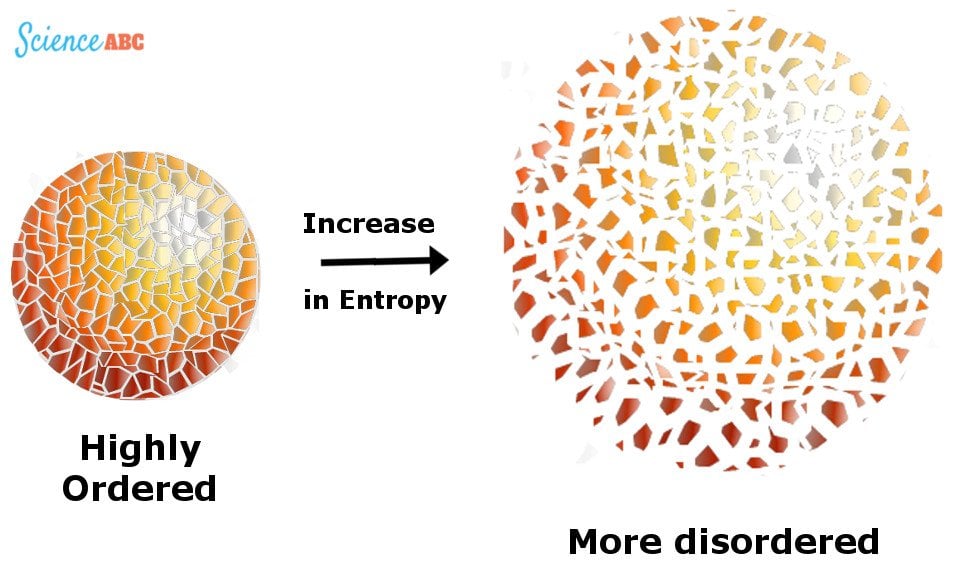

Entropy quantifies uncertainty and works as a measure of disorder. In physics, it measures how uncertain we are about the state of a system: a more ordered state has less uncertainty, and thus less entropy, than a more disordered one. Entropy is also a concept that frames the evolution of systems from order to disorder, and it permeates everything around us.

The principle of maximum entropy is useful explicitly only when applied to testable information. Testable information is a statement about a probability distribution whose truth or falsity is well-defined. In ordinary language, the principle can be said to express a claim of epistemic modesty, or of maximum ignorance. The selected distribution is the one that makes the least claim to being informed beyond the stated prior data, that is to say, the one that admits the most ignorance beyond the stated prior data.

The maximum entropy principle is also needed to guarantee the uniqueness and consistency of probability assignments obtained by different methods, statistical mechanics and logical inference in particular. It makes explicit our freedom in using different forms of prior data. As a special case, a uniform prior probability density (Laplace's principle of indifference, sometimes called the principle of insufficient reason) may be adopted. Thus, the maximum entropy principle is not merely an alternative way to view the usual methods of inference of classical statistics, but represents a significant conceptual generalization of those methods. None of this, however, removes the need to show that a thermodynamical system is ergodic before treating it as a statistical ensemble.

For continuous distributions, entropy is defined relative to a reference density q(x), which Jaynes called the "invariant measure" and which is proportional to the limiting density of discrete points.
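To make the link between order, uncertainty, and entropy concrete, here is a minimal Python sketch that computes the Shannon entropy (in bits) of three made-up distributions over four states. The function name `shannon_entropy` and the example distributions are illustrative assumptions, not something taken from a particular library.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return sum(-p * math.log2(p) for p in probs if p > 0)

# Three hypothetical distributions over four possible states.
ordered = [1.0, 0.0, 0.0, 0.0]        # the state is known with certainty
skewed = [0.7, 0.1, 0.1, 0.1]         # one state is likely, but not certain
uniform = [0.25, 0.25, 0.25, 0.25]    # principle of indifference: no state favored

for name, dist in [("ordered", ordered), ("skewed", skewed), ("uniform", uniform)]:
    print(f"{name:8s} entropy = {shannon_entropy(dist):.3f} bits")
```

The fully ordered case has zero entropy, the uniform case reaches the maximum for four states (2 bits), and the skewed case falls in between. This is the sense in which a uniform prior is the maximum entropy choice when nothing beyond the list of possible states is known.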
In most practical cases, the stated prior data or testable information is given by a set of conserved quantities (average values of some moment functions) associated with the probability distribution in question. This is the way the maximum entropy principle is most often used in statistical thermodynamics. Another possibility is to prescribe some symmetries of the probability distribution. The equivalence between conserved quantities and corresponding symmetry groups implies a similar equivalence for these two ways of specifying the testable information in the maximum entropy method.
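As an illustration of testable information given as an average value, the sketch below finds the maximum entropy distribution for a six-sided die whose long-run mean is assumed to be 4.5. It relies on the standard result that, under a mean constraint, the maximum entropy solution has the exponential form p_i ∝ exp(-λ x_i). The function name `maxent_with_mean`, the bisection bounds, and the die example are assumptions chosen for illustration.

```python
import math

def maxent_with_mean(values, target_mean, lo=-50.0, hi=50.0, tol=1e-12):
    """Maximum entropy distribution on `values` with a prescribed mean.

    Assumes min(values) < target_mean < max(values). The solution has the
    form p_i proportional to exp(-lam * x_i); the mean decreases
    monotonically as lam grows, so lam can be found by bisection.
    """
    def distribution(lam):
        exponents = [-lam * x for x in values]
        shift = max(exponents)                      # avoid overflow in exp
        weights = [math.exp(e - shift) for e in exponents]
        total = sum(weights)
        return [w / total for w in weights]

    def mean(lam):
        return sum(p * x for p, x in zip(distribution(lam), values))

    while hi - lo > tol:
        mid = (lo + hi) / 2
        if mean(mid) > target_mean:
            lo = mid      # mean too high: a larger lam is needed
        else:
            hi = mid      # mean too low (or equal): a smaller lam is needed
    return distribution((lo + hi) / 2)

faces = [1, 2, 3, 4, 5, 6]
probs = maxent_with_mean(faces, 4.5)
for face, p in zip(faces, probs):
    print(f"P({face}) = {p:.4f}")
```

Because the assumed mean is above the fair-die value of 3.5, the probabilities rise steadily toward the higher faces. Setting the target mean to exactly 3.5 recovers the uniform distribution, since the constraint then adds no information beyond the list of faces.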

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge about a system is the one with the largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information).

Another way of stating this: take precisely stated prior data or testable information about a probability distribution function. Consider the set of all trial probability distributions that would encode the prior data. According to this principle, the distribution with maximal information entropy is the best choice.

The principle was first expounded by E. T. Jaynes in two papers in 1957, in which he emphasized a natural correspondence between statistical mechanics and information theory. In particular, Jaynes offered a new and very general rationale for why the Gibbsian method of statistical mechanics works. He argued that the entropy of statistical mechanics and the information entropy of information theory are basically the same thing. Consequently, statistical mechanics should be seen just as a particular application of a general tool of logical inference and information theory.
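For a discrete distribution, the principle can be written as a constrained optimization problem. The notation below ($p_i$ for the probabilities, $f_k$ for the constraint functions, $F_k$ for their prescribed averages, $\lambda_k$ for the Lagrange multipliers, and $Z$ for the normalizing constant) is introduced here for illustration and is not taken from the post itself.

```latex
% Choose the probabilities p_i that maximize the information entropy
\max_{p_1,\dots,p_n} \; H(p) = -\sum_{i=1}^{n} p_i \log p_i
\quad \text{subject to} \quad
\sum_{i=1}^{n} p_i = 1,
\qquad
\sum_{i=1}^{n} p_i \, f_k(x_i) = F_k \quad (k = 1,\dots,m).

% Solving with Lagrange multipliers gives the Gibbs (exponential) form
p_i = \frac{1}{Z(\lambda_1,\dots,\lambda_m)}
      \exp\!\Big(-\sum_{k=1}^{m} \lambda_k f_k(x_i)\Big),
\qquad
Z(\lambda_1,\dots,\lambda_m) = \sum_{i=1}^{n}
      \exp\!\Big(-\sum_{k=1}^{m} \lambda_k f_k(x_i)\Big).
```

With no constraints beyond normalization ($m = 0$), the exponent vanishes and the solution reduces to the uniform distribution $p_i = 1/n$. When the single constraint is the average energy, this is the familiar Boltzmann form of the Gibbsian method.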

